MET News

04th February 2026

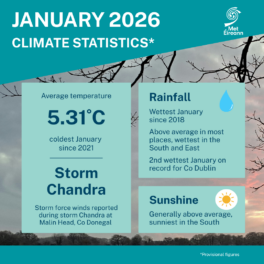

Climate Statement for January 2026

Cool, very wet in the South and East. January 2026 ... more

29th January 2026

New Masters in Artificial Intelligence for Weather and Climate Change launched

A new MSc programme co-delivered by Met Éireann a... more

13th January 2026

Met Éireann presents two awards at action-packed Stripe YSTE

Met Éireann was once again delighted to take part... more

06th January 2026

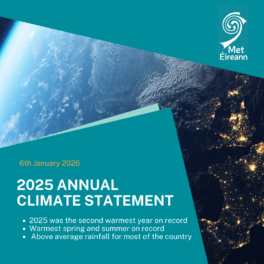

Annual Climate Statement for 2025

Second warmest year on record with above average r... more

05th January 2026

Met Éireann Internship Opportunities

Launch your ... more

05th January 2026

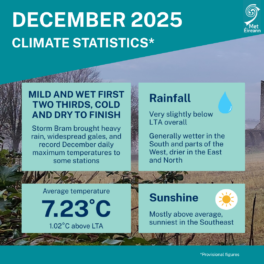

Climate Statement for December 2025

Mild and wet first two thirds, cold and dry final ... more

24th December 2025

Met Éireann Aviation forecast for Santa, Christmas Eve 2025

The countdown is on until Santa sets off on his s... more

23rd December 2025

Met Éireann partners in Polarstern expedition

Met Éireann climate scientist and researcher, Dr ... more

10th December 2025

New study links climate change to increased rainfall and flood risk

A new rapid attribution study from Maynooth Univer... more

03rd December 2025

Climate Statement for Autumn 2025

Mild and very wet. Meteorological Autumn 2025 was t... more

02nd December 2025

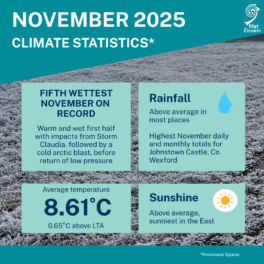

Climate Statement for November 2025

Wet overall. Very mild first half, cooler second h... more

14th November 2025

Met Éireann team out in force for Science Week 2025

Science Week 2025 has been a busy period for the M... more

04th November 2025

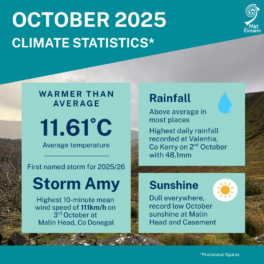

Climate Statement for October 2025

Mild, dull and wet overall. October 2025 was a mil... more

29th October 2025

Met Éireann and Teagasc team up to host educational webinar

Met Éireann and Teagasc have joined forces to org... more

24th October 2025

WMO Congress drives momentum for ‘Early Warnings for All’

‘Early Warnings for All’ was the primary topic... more

23rd October 2025

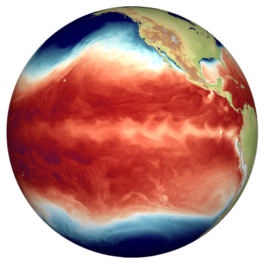

Met Éireann contributes to publication on future development of El Niño and its relevance to Europe

A collaborative study on the climate phenomenon kn... more

14th October 2025

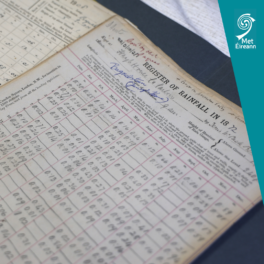

Met Éireann opens historic weather transcription project to all

Members of the public are invited to become citize... more

08th October 2025

Met Éireann wins national climate change leadership award

Met Éireann has been named Public Sector Organisa... more